Will AI-Generated Images Replace Stock Photography in Marketing?

Steve Richards

On November 2, 2023, Collins Dictionary announced that the word of the year was “AI.” Even though Artificial Intelligence (AI) is technically two words, this year has proven that AI is going to be talked about for the foreseeable future.

Last year, DMW’s Justin Stauffer wrote about 5 Ways Generative A.I. Can Support Your Medicare Marketing Efforts. Justin broke down how ChatGPT (an AI bot streamlined for text generation) can assist marketers — finding and organizing information, streamlining copy through prompts, and creating images by simply telling the machine what you want to see. Justin notes, “AI can generate creative images, but arguably there are limitations compared to a human artist.” It’s been about a year since that article, and the landscape has changed drastically, closing the gap between human artists and machine-generated images.

How does AI image creation work?

There are so many good resources that explain exactly how AI works. For now, let’s focus on how images are created using textual input. I could tell you about neural networks, but instead I’ll explain it in terms of ordering at a restaurant.

Imagine you’re looking at the world’s largest menu — The Cheesecake Factory, obviously. You don’t know exactly what you want, but you know you have a taste for something. You tell the server you want something hearty, spicy, and creamy. The server, who knows the menu inside and out, can come up with a recommendation based on the prompts you provided.

Alternatively, if you’re hyper specific (you want linguine, sub red sauce for white, sub chicken for shrimp), the “output” (i.e., the recommendation) is better because your “prompts” were more targeted. In both cases, the server is giving your prompts to the kitchen, and the kitchen is creating your “output” based on the information it received from the server.

AI is a very powerful tool, but it needs guidance. And the more in-depth that guidance is, the better the image will be.

The quality of AI vs. stock photography today

AI works, but how does it stack up against the tried-and-true method of spending hours looking for (and eventually finding) the perfect image on a stock photography website?

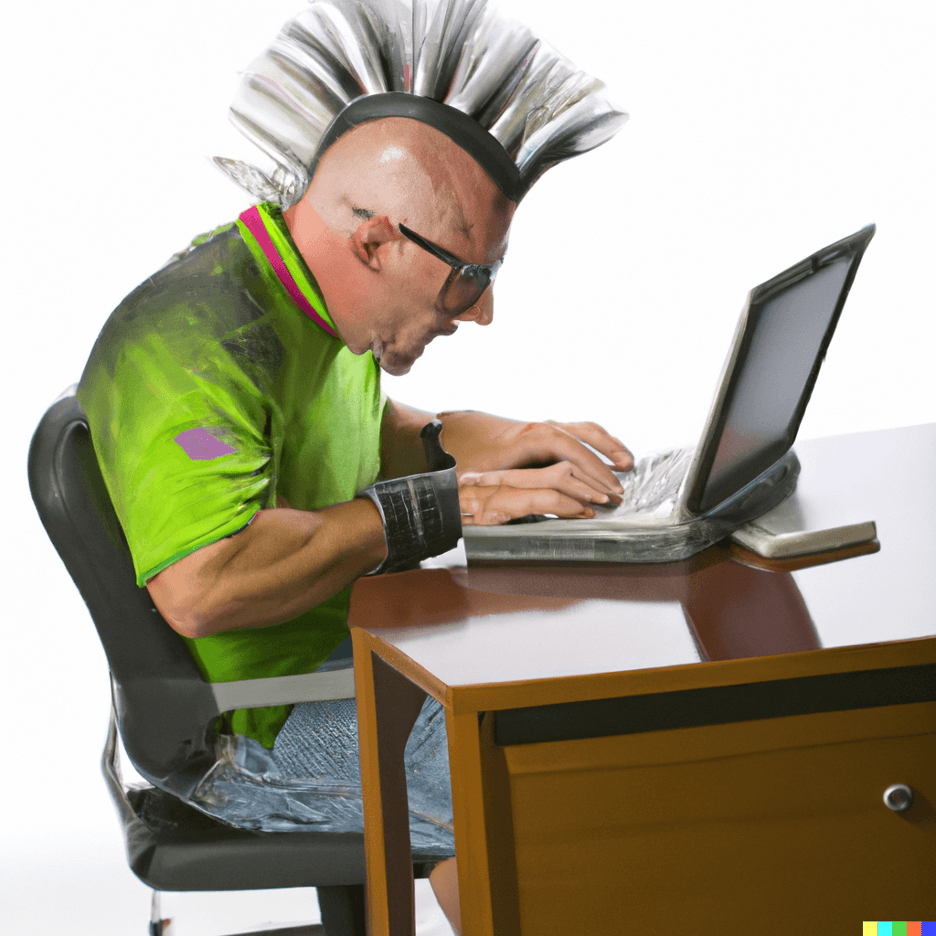

Back in March 2023, Justin Stauffer used DALL-E 2 to produce this image of a “middle-aged man with a mohawk, working on a computer.”

Justin Stauffer’s original attempt at a DALL-E 2 stock photo example, published March 19, 2023.

As you can see from the example above, Mr. Hawk here was obviously “Frankensteined,” as Justin put it.

The examples below look much more human, but the mohawks are obvious wigs and both men’s hands resemble lobster claws. (AI — and I can’t stress this enough — doesn’t know what human hands look like.) Keep in mind that when you read this, this information is probably already outdated.

Using the same prompt Justin used a year ago, you can see the jump in quality using Adobe’s new AI Generation Tools.

I decided to run another test and use search prompts that we’ve used here at DMW for client work.

As you can see, Adobe’s AI is quite capable of creating images that would likely fool most people. But upon close inspection, you’ll see some alarming issues.

Elderly couple, talking over forms, sitting at a table, with a laptop open.

Example 1 looks totally normal until you realize the woman has no eyes and her forearm is oddly interacting with the notebook. The man’s hands aren’t quite right, and the laptop is floating on top of the calculator.

Older man in blue shirt, smiling, getting a checkup by a doctor, studio lighting.

Example 2 is nearly perfect, except for the stethoscope not wanting to exist in the reality that was created for it (for lack of a better way to explain it).

Example 3 suffers from stethoscope issues, as well, and took my prompt of “studio lighting” too seriously.

Medicare-age couple, happy, walking dog through a park on a sunny day, portrait style, natural lighting.

Examples 4 and 5 are nightmare creations, and I can never unsee them. (Sorry.) But the dogs are mostly correct.

There is much room for improvement, especially when it comes to how things interact with each other and exist in the same space, whether it’s a laptop and calculator, holding hands … a hand holding anything … or even just a hand.

All things considered, the advancements in these images are wild compared to the images Justin created a year ago, which makes me wonder: How will AI images look in another 6 months? In a year? In 10 years? The power of AI is being unleashed and the sky is the limit.

The cost of artificial intelligence

It’s this designer’s belief that the future will belong to artists who can use AI as a tool, not a crutch. Using prompts and their outputs to create concepts, mood boards, and spec art is a huge time saver.

Sometimes, communicating the “feel” of something to a client will also help speed up approvals and cut down on the instances of the dreaded response: “I’m just not seeing it.” Using AI, you can easily mock up multiple “flavors” of ideas in a fraction of the time it would take to add placeholder images from a stock site.

Most offerings like DALL-E, Midjourney, Getty Generative AI, and Adobe Firefly are affordably priced, reflecting how these services are still trying to find their seat at the table. However, this new technology may not be low cost for long, as persistent improvements are being made to prompts and outputs alike.

Are artists in trouble?

While AI is on the rise, there’s very little it can do without guidance. AI still has a lot it needs to learn from us. There’s still so much humanity that needs to be programmed into machine learning — and only people can do that. As Forbes put it in 2022, “By empowering anybody to become ‘armchair’ data scientists and engineers, the power and utility of AI will become within reach for us all.”

Will extended tweaking and adjustment by the varied mass of mankind have a humanizing effect on AI? We’ll need to wait and see. Humans are inherently empathetic. We know the human condition because we experience it every day.

Our prompts are our collective experiences, and our outputs are how we view the world around us. Plus, we also know what our own hands look like.